This article shows one approach to managing cron job execution in a multi-server setup. Say you have a single server running a Node.js application, plus a few “heavy” cron jobs that run at different intervals. By heavy I mean jobs that access the database, update many entities based on some conditions, and so on. What if your client would like to have 2, 3, or 10 servers?

If you keep executing those jobs on every server, the same work runs several times, which can hurt the overall performance of your application and database (higher latency). And that’s not good. Let’s see what you can do using AWS.

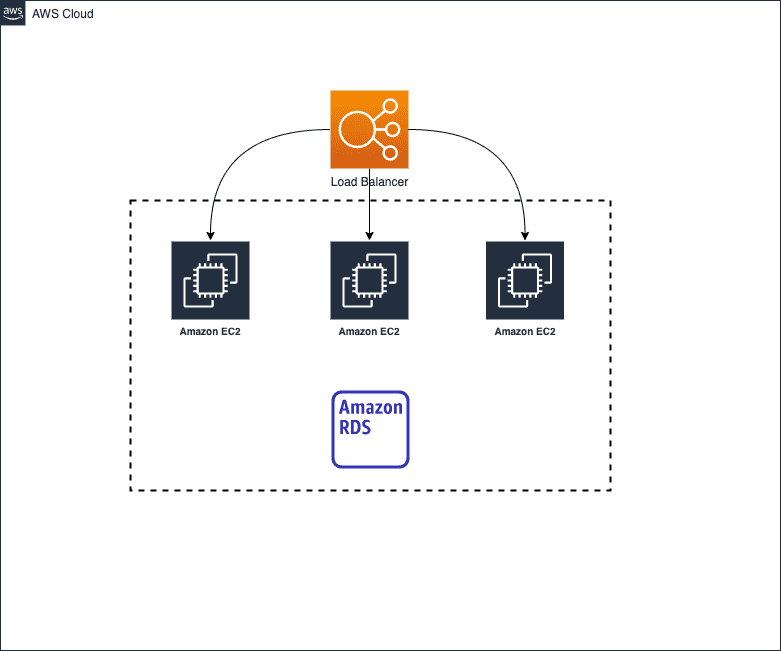

Infrastructure example

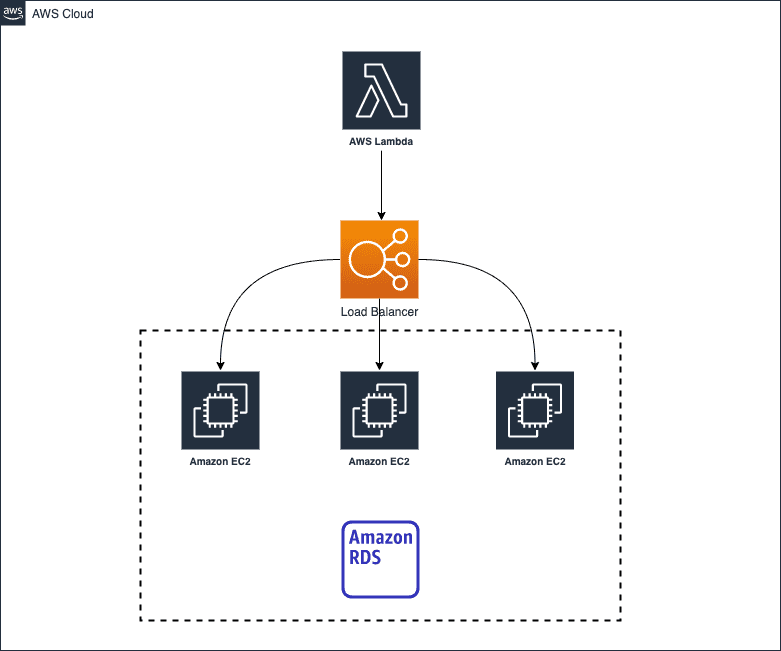

So, we have a three-component infrastructure: a load balancer, three EC2 servers, and an RDS cluster. To solve our problem with cron jobs, we need a fourth component that’ll manage cron job execution. And ta-daaaa 🥁

This component is a Lambda function that will trigger our server endpoint and execute the cron job. The point is to have a new secured API endpoint that will trigger job execution. This endpoint can receive an array of job names that need to be executed.

Let’s say you have configured this endpoint using your web framework and programming language of choice. Here are a few hints on how to build the flow:

- Protect this route with a token or API key so that only authorized callers can trigger it.

- Register all cron jobs when the server starts. In other words, store the job functions somewhere, for example in a CronScheduler singleton service. With JavaScript you can do something like this:

```javascript
const firstJob = () => {}
const secondJob = () => {}

const jobs = [firstJob, secondJob]
```

- In the endpoint handler, check if the registered jobs include the provided job name. If so, execute it.

```javascript
// jobNames is the array of job names received by the endpoint
const jobExecutor = (jobNames) => {
  jobs
    .filter(job => jobNames.includes(job.name))
    .forEach(job => job())
}
```
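Putting the hints above together, here is a minimal, framework-agnostic sketch of what the endpoint handler could look like. Everything in it is an assumption made for illustration: the names `jobRegistry`, `knownJobNames`, and `handleRunJobs`, the status codes, and the plain string comparison of the token (a production version would more likely use a constant-time comparison). It registers jobs under explicit names in a `Map` rather than relying on the function's `name` property, which can change when code is minified.

```typescript
// A job is any function that does some work, synchronously or not.
type Job = () => void | Promise<void>;

// Records which jobs ran; only here so the sketch is observable.
const ranJobs: string[] = [];

// Hypothetical registry, filled once when the server starts.
const jobRegistry = new Map<string, Job>([
  ["firstJob", () => { ranJobs.push("firstJob"); }],
  ["secondJob", async () => { ranJobs.push("secondJob"); }],
]);

interface RunJobsBody { jobs: string[]; token: string }
interface RunJobsResult { status: number; executed: string[] }

// Keep only the requested names that are actually registered.
function knownJobNames(requested: string[]): string[] {
  return requested.filter(name => jobRegistry.has(name));
}

// Call this from your route handler with the parsed request body.
async function handleRunJobs(
  body: RunJobsBody,
  expectedToken: string,
): Promise<RunJobsResult> {
  // Reject callers that do not present the shared token.
  if (body.token !== expectedToken) {
    return { status: 401, executed: [] };
  }
  const executed = knownJobNames(body.jobs);
  for (const name of executed) {
    // Jobs run one after another; unknown names were filtered out above.
    await jobRegistry.get(name)!();
  }
  return { status: 200, executed };
}
```

Running the jobs sequentially keeps the load on the database predictable; if your jobs are independent, you could run them in parallel instead.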

Lambda example

```typescript
import axios from 'axios';
import { isEmpty } from 'lodash';

// Comma-separated list of endpoint URLs; falls back to an empty list if the variable is not set.
const urls = (process.env.ENDPOINTS ?? '').split(',').filter(url => !isEmpty(url));

// The event shape matches the constant JSON input configured in EventBridge.
export const handler = async (event: { params: { jobs: string[]; token: string } }): Promise<void> => {
  const { jobs, token } = event.params;

  await Promise.all(
    // A failed request is swallowed so that one unreachable endpoint does not fail the whole invocation.
    urls.map(url => axios.post(url, { jobs, token }).catch(() => undefined))
  );
};
```

As you can see, we have specified an ENDPOINTS environment variable that contains comma-separated endpoints. Some of you might say that we still have three servers with the same cron jobs, as before. But the point is, we have a load balancer. So, our Lambda will call the load balancer’s endpoint, and the request will be routed to only one server, picked by the load balancer (for example, the least busy one, depending on its routing algorithm). That means our cron jobs will be executed on one server only. It could be a different server each time.
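The Lambda example silently ignores failed requests. If you would rather know which endpoints could not be reached, one possible variation is sketched below. It is only an illustration under stated assumptions: `parseEndpoints`, `triggerAll`, and the `Post` type are hypothetical names, and the HTTP call is passed in as a function so that any client (axios, fetch, and so on) can be plugged in.

```typescript
// Any function that POSTs a body to a URL, e.g. a thin wrapper around axios.post.
type Post = (url: string, body: unknown) => Promise<unknown>;

interface TriggerResult { url: string; ok: boolean }

// Turn the raw ENDPOINTS value into a clean list of URLs.
function parseEndpoints(raw: string | undefined): string[] {
  return (raw ?? "")
    .split(",")
    .map(url => url.trim())
    .filter(url => url.length > 0);
}

// Call every endpoint and report success or failure per URL.
// A failing endpoint never rejects the whole batch.
async function triggerAll(
  urls: string[],
  body: unknown,
  post: Post,
): Promise<TriggerResult[]> {
  return Promise.all(
    urls.map(url =>
      post(url, body).then(
        () => ({ url, ok: true }),
        () => ({ url, ok: false }),
      ),
    ),
  );
}
```

Inside the handler you could then log the entries where `ok` is false, which makes a misconfigured URL much easier to spot.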

Trigger lambda

Okay, now we need something that will trigger our Lambda (too many triggers in this article 😅). AWS provides a great service called EventBridge. It does all we need: it triggers our Lambda function at specific intervals.

So, open up the AWS Management Console and jump to EventBridge. Click the Create rule button.

Specify the schedule using a cron expression. Under the Targets section, select Lambda function as the target and choose your function’s name.

The next step is to pass some input data to our function. Select Configure input -> Constant JSON. To match the Lambda example from the previous section, pass this input:

```json
{
  "params": {
    "jobs": ["hello"],
    "token": "example"
  }
}
```

Remember to set the endpoint URLs in the ENDPOINTS environment variable in the function’s configuration section (in the Lambda service).

And we’re all set! As you may have figured out by now, we can create as many rules as we want using the same Lambda function as a target. This is useful if we need to execute different jobs at different intervals. We designed our endpoint and Lambda function to receive job names precisely to have this ability.
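To make this more concrete, here is a small sketch of how two rules could share the same Lambda target. The rule names, the `cleanup` and `report` job names, and the `ruleSketches` structure are all made up for illustration; only the input shape and the `hello` job come from the example above. EventBridge schedule expressions are written either as `rate(...)` or as a six-field `cron(...)` expression.

```typescript
// Shape of the constant JSON input expected by the Lambda example.
interface CronInput { params: { jobs: string[]; token: string } }

interface RuleSketch {
  ruleName: string;
  scheduleExpression: string; // what you enter as the rule's schedule
  input: CronInput;           // what you paste into "Constant JSON"
}

const exampleToken = "example"; // placeholder, use your real token

const ruleSketches: RuleSketch[] = [
  {
    ruleName: "every-five-minutes",
    scheduleExpression: "rate(5 minutes)",
    input: { params: { jobs: ["hello"], token: exampleToken } },
  },
  {
    ruleName: "nightly",
    // Every day at 03:00 UTC: minutes, hours, day of month, month, day of week, year.
    scheduleExpression: "cron(0 3 * * ? *)",
    input: { params: { jobs: ["cleanup", "report"], token: exampleToken } },
  },
];

// Print the JSON to paste into each rule's input configuration.
for (const rule of ruleSketches) {
  console.log(`${rule.ruleName} [${rule.scheduleExpression}]: ${JSON.stringify(rule.input)}`);
}
```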

So, do you need to execute other jobs at different intervals? Create a new EventBridge rule. That’s it, mate!